A New Method for Stable Software: Micro Code Reviews for the AI Era

Why AI-generated code needs lightweight verification, and how micro code reviews with git-lrc can improve software stability and developer comprehension.

If you prefer watching a video version of this article, check out:

Why This AI Moment Changes How We Build Software

I have been into software for almost a decade now. I grew up with software, using software, building software, being enthusiastic about software. I thought it was the most magical thing. I still do.

When I was in college, I read The Society of Mind by Marvin Minsky. It was a very famous book, at least in some research circles. I was enthralled by it. I loved that book and reread it many times.

So this AI revolution is nothing unexpected to someone like me. I’m absolutely for it.

But in terms of practical details, Minsky wasn’t exactly right. He built SNARC, one of the earliest neural network machines, in 1951, but he later moved toward other approaches, and Perceptrons, the 1969 book he wrote with Seymour Papert, became known for its critique of simple neural networks. With hindsight, we can now say neural networks were probably a very good idea, because modern deep learning is fundamentally based on neural models. And today, compute is what is making things happen for us.

We have Rich Sutton’s “Bitter Lesson,” where he argued that the biggest lesson from 70 years of AI research is that general methods that leverage computation ultimately beat approaches built on handcrafted human knowledge. That idea has clearly played out in practice.

So this is a wonderful time to do software in a different way.

At Hexmos, we call ourselves builders of semi-autonomous agents. In a way, we are building agents that help build other agents. That is our focus.

If AI Writes More Code, What Becomes the Human Job?

Practically speaking, most of us are using tools like GitHub Copilot, Cursor, Claude Code, OpenCode, or similar systems. We are all generating large amounts of code now, and that means we are constantly dealing with code we do not fully understand ourselves.

There is simply too much being generated for manual checking.

So more and more of software development is going to revolve around two pillars: specification and verification.

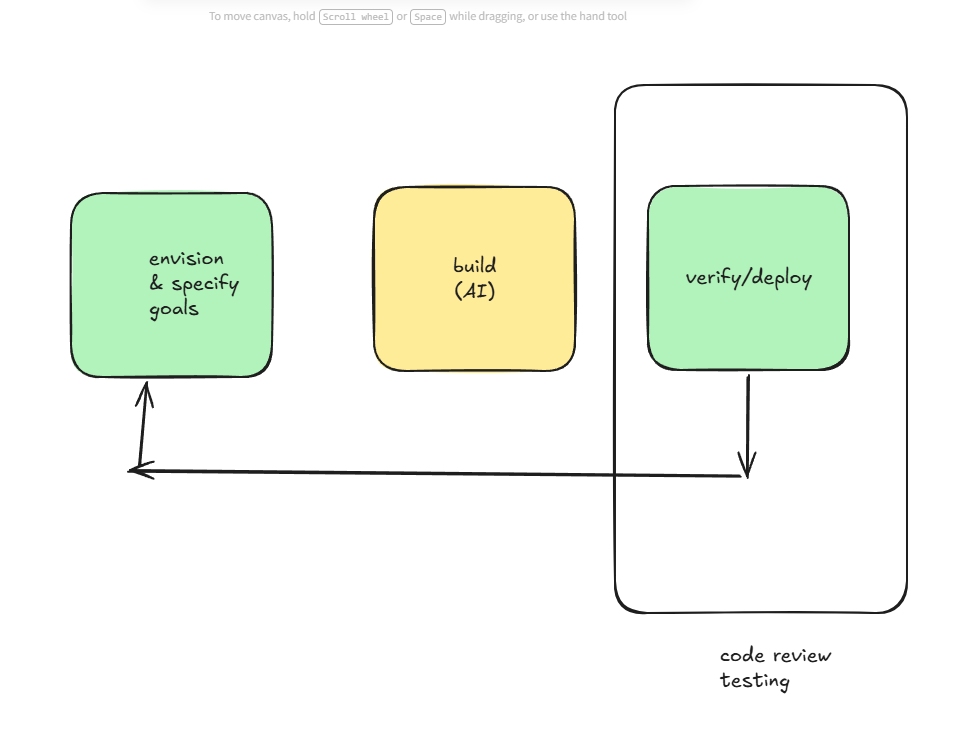

Originally, we had three pillars:

- Envisioning goals and specifying requirements

- Building software

- Deploying software, observing feedback from the world, tests, and production systems, and then improving the system

Now with AI, the middle part — the actual implementation work — is becoming increasingly automated.

So envisioning and specification are still largely human responsibilities. And I think we want to keep it that way. As people like Herb Simon have argued, we want to design environments the way humans want them, not the way machines want them. We are building these systems for ourselves.

And then there is verification.

Specifying goals and checking whether things were done correctly is increasingly becoming the human responsibility.

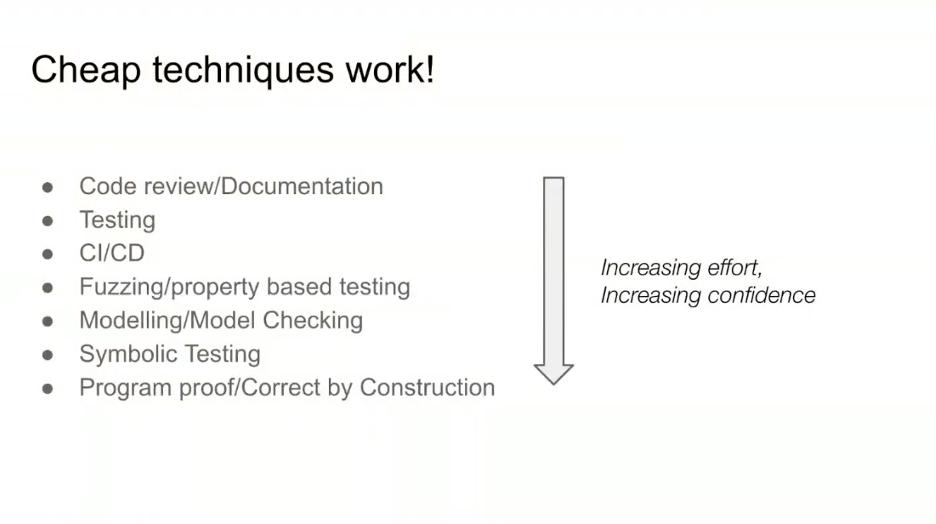

Historically, verification in software engineering has drawn on a spectrum of techniques, from lightweight ones like code review to heavyweight ones like formal verification:

- Code review

- Documentation

- Testing

- CI/CD

- Fuzzing

- Property-based testing

- Formal verification

Source: “What works and doesn't selling formal methods”

The cheapest and most common method has been code review. You simply ask a senior engineer to look at the code.

But now the volume has exploded because machines are generating code continuously. You may generate thousands of lines of code in a single day.

Why Traditional Code Review Breaks When Code Volume Explodes

So how do you verify all of that?

We need more review, and we need better methods for doing it.

To address this, I built git-lrc around what I believe is a comparatively new technique: micro code reviews.

The Core Idea: Review Code at the Exact Moment It Matters Most

What do I mean by “micro”?

All of us already use git commit. Whether you are using Copilot, Cursor, Claude Code, OpenCode, or something else, eventually you are storing code somewhere and creating commits.

That is a universal workflow shared by tens of millions of developers.

So I thought: why not place the review step exactly there?

Committing is already the point where you are ready to create a snapshot. So why not review the snapshot before it gets finalized?
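Git already supports running code at exactly this point. A `pre-commit` hook runs before the commit is recorded, and a non-zero exit status aborts the commit. The sketch below (Python 3.9+) illustrates only that general mechanism. It is not how git-lrc is implemented, and the `review` rule is a hypothetical stand-in for a real reviewer. The hook reads the staged snapshot with `git diff --cached` and collects the lines each file would gain. If the stand-in check finds anything, it blocks the commit.

```python
#!/usr/bin/env python3
"""Hypothetical pre-commit hook: review the staged snapshot before Git records it."""
import subprocess
import sys
from pathlib import Path


def staged_diff() -> str:
    # --cached diffs the index (what is staged for the next commit) against HEAD;
    # --unified=0 drops context lines so only changed lines remain.
    result = subprocess.run(
        ["git", "diff", "--cached", "--unified=0", "--no-color"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


def added_lines(diff: str) -> dict[str, list[str]]:
    """Map each changed file to the lines this commit would add."""
    files: dict[str, list[str]] = {}
    current = None      # file whose hunks we are currently reading
    in_header = False   # between "diff --git" and the first "@@" hunk header
    for line in diff.splitlines():
        if line.startswith("diff --git "):
            in_header, current = True, None
        elif in_header and line.startswith("+++ "):
            # "+++ b/path" names the new file; "+++ /dev/null" means a deletion.
            target = line[4:]
            current = target[2:] if target.startswith("b/") else None
        elif line.startswith("@@"):
            in_header = False
        elif not in_header and current is not None and line.startswith("+"):
            files.setdefault(current, []).append(line[1:])
    return files


def review(files: dict[str, list[str]]) -> list[str]:
    # Stand-in for a real reviewer (hypothetical): flag leftover debugger calls.
    return [
        f"{path}: leftover breakpoint() call"
        for path, lines in files.items()
        for text in lines
        if "breakpoint()" in text
    ]


def main() -> int:
    findings = review(added_lines(staged_diff()))
    for finding in findings:
        print(finding, file=sys.stderr)
    return 1 if findings else 0  # a non-zero exit makes `git commit` abort


# Act only when Git runs this file as .git/hooks/pre-commit, so it can also be imported.
if __name__ == "__main__" and Path(sys.argv[0]).name == "pre-commit":
    sys.exit(main())
```

To try it, save the file as `.git/hooks/pre-commit` and make it executable with `chmod +x .git/hooks/pre-commit`. The hook runs on every commit, so anything it flags is caught before it becomes history. When you deliberately need to bypass it, `git commit --no-verify` skips the hook.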

That became the core insight.

And now we are able to execute it properly.

Another important aspect is understanding what the machine is generating. Sometimes AI systems make terrible architectural decisions, introduce ugly patterns, or open security holes.

They may:

- Introduce expensive infrastructure operations

- Expose sensitive data

- Change behavior unintentionally

- Corrupt important logic

- Leak credentials
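One cheap guard against the last two risks is a pattern scan over the lines a commit adds. The sketch below is a generic, rule-based illustration, not how git-lrc works. The rule names and patterns are assumptions chosen for the example. The `AKIA` prefix followed by 16 uppercase letters or digits is the documented format of long-term AWS access key IDs.

```python
import re

# A deliberately tiny, hypothetical rule set; real secret scanners ship many more rules.
RULES = {
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private key block": re.compile(r"-----BEGIN (?:[A-Z]+ )*PRIVATE KEY-----"),
    "hard-coded credential": re.compile(
        r"(?i)(?:password|passwd|secret|api[_-]?key|token)\b\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


def scan_added_lines(lines: list[str]) -> list[tuple[int, str]]:
    """Return (1-based position among the added lines, rule name) for every match."""
    hits = []
    for position, text in enumerate(lines, start=1):
        for name, pattern in RULES.items():
            if pattern.search(text):
                hits.append((position, name))
    return hits
```

Rules like these miss anything they were not written for and can flag harmless strings. They complement, rather than replace, a review that reads the change in context.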

So bad things can happen.

That is why we want lightweight review systems that help produce more stable software while reducing bugs and operational issues.

Of course, there are more rigorous techniques like formal specification and formal verification. But most software does not require that level of rigor.

Often, we simply want a fast and cheap way to sanity-check generated code.

That is what micro review is about.

It is an extremely cheap verification method that catches issues early.

Comprehension matters too: as you commit changes, the system explains what each change does, so you understand the code you are about to keep.

What the Workflow Feels Like in Practice

Here is what that looks like in practice.

Imagine I intentionally add some obviously bad code to a small Go program. I stage the change the normal way and start a commit. Instead of letting the commit go through immediately, git-lrc opens a review window and begins analyzing the staged diff in real time. The important point is that the review happens at the exact moment I am about to preserve the change, so I do not need to remember a separate workflow or open a different tool.

Within roughly 13 seconds, the first result appears. The system flags part of the change as suspicious and says that the lines look random and likely not intended for production use. That kind of feedback matters because it catches the sort of low-quality AI output that is easy to miss when you are moving quickly. Rather than forcing me to inspect every line from scratch, the review immediately tells me where to focus my attention.

The interface then generates short visual summaries of the change and walks through them step by step. As I move through the review, it explains that a new Go package called main has been introduced, that standard boilerplate was added, and that some of the comments look like placeholders or unfinished experimental code. It also points me directly to the affected file, main.go, so I can jump straight from the review into the source.
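The review in this demo is AI-driven, so it can judge whether code looks unfinished in context. For contrast, here is a deliberately crude, rule-based stand-in, which is not git-lrc's method. It flags only comment lines that contain common placeholder markers. The marker list and the comment prefixes are assumptions.

```python
import re

# Hypothetical markers that often signal unfinished or placeholder code.
PLACEHOLDER = re.compile(
    r"(?i)\b(?:TODO|FIXME|XXX|HACK|placeholder|lorem ipsum|not implemented)\b"
)
# Comment prefixes for a few common languages (Go/C-family, Python/shell, SQL, block comments).
COMMENT = re.compile(r"^\s*(?://|#|--|/\*|\*)")


def placeholder_comments(lines: list[str]) -> list[int]:
    """Return 1-based indices of comment lines that look like placeholders."""
    return [
        number
        for number, text in enumerate(lines, start=1)
        if COMMENT.match(text) and PLACEHOLDER.search(text)
    ]
```

A keyword rule cannot tell that a line "looks random" the way the review above does. It only shows the cheapest possible version of the same signal.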

What makes this useful is that the tool does not stop at description. It also explains why the change may be risky. In this example, the review highlights that unfinished code can reduce stability and can create refactoring work later. So the system is doing two jobs at once: it is identifying a possible defect, and it is helping me understand the nature and impact of the change I just made.

From there, I have a clear next step. I can let the tool attempt an automatic fix in the background, or I can pass the issue to another coding assistant such as Copilot or Claude and ask it to repair the specific problem. In the demo, Copilot recognizes the placeholder text and removes it.
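Handing an issue to another assistant works best when the request is narrow. The minimal sketch below turns one finding into a focused prompt. It assumes a finding carries a file, a line, a summary, and a risk. These field names are hypothetical and are not git-lrc's output format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """One review comment; the field names are illustrative, not git-lrc's schema."""
    path: str
    line: int
    summary: str
    risk: str


def fix_prompt(finding: Finding) -> str:
    """Build a narrowly scoped request to paste into a coding assistant."""
    return (
        f"In {finding.path}, line {finding.line}: {finding.summary}\n"
        f"Why it matters: {finding.risk}\n"
        "Fix only this issue, keep the rest of the file unchanged, "
        "and explain the change in one sentence."
    )
```

Scoping the prompt to a single finding keeps the follow-up diff small. So does asking the assistant to leave the rest of the file alone. A small diff is easy to check at the next commit.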

That is the core value proposition. In less than a minute, I get a review, a concise explanation of what changed, a warning about what might go wrong, and a straightforward path to fixing the issue.

In practice, the system acts as both:

- A lightweight verification layer

- A comprehension aid for AI-generated code

That is the essence of the micro review methodology.

Why This Tiny Review Step Can Improve Reliability and Revenue

And I believe this can significantly improve code quality across engineering teams.

Code quality directly impacts:

- Reliability

- Uptime

- Customer experience

- Revenue retention

- Operational stability

Does the software stay available at night while engineers are asleep?

Does it behave as expected?

Does it annoy users?

Does it create churn or revenue loss?

Generated code must be checked, and we need cheap ways to do it continuously.

This gives companies a way to improve engineering standards with very little effort.

The overhead is tiny — often less than a minute per commit — but the quality gains can be substantial.

So hopefully this has been useful.

If this sounds interesting, go to Hexmos git-lrc.

From there, you can install it and try it out.

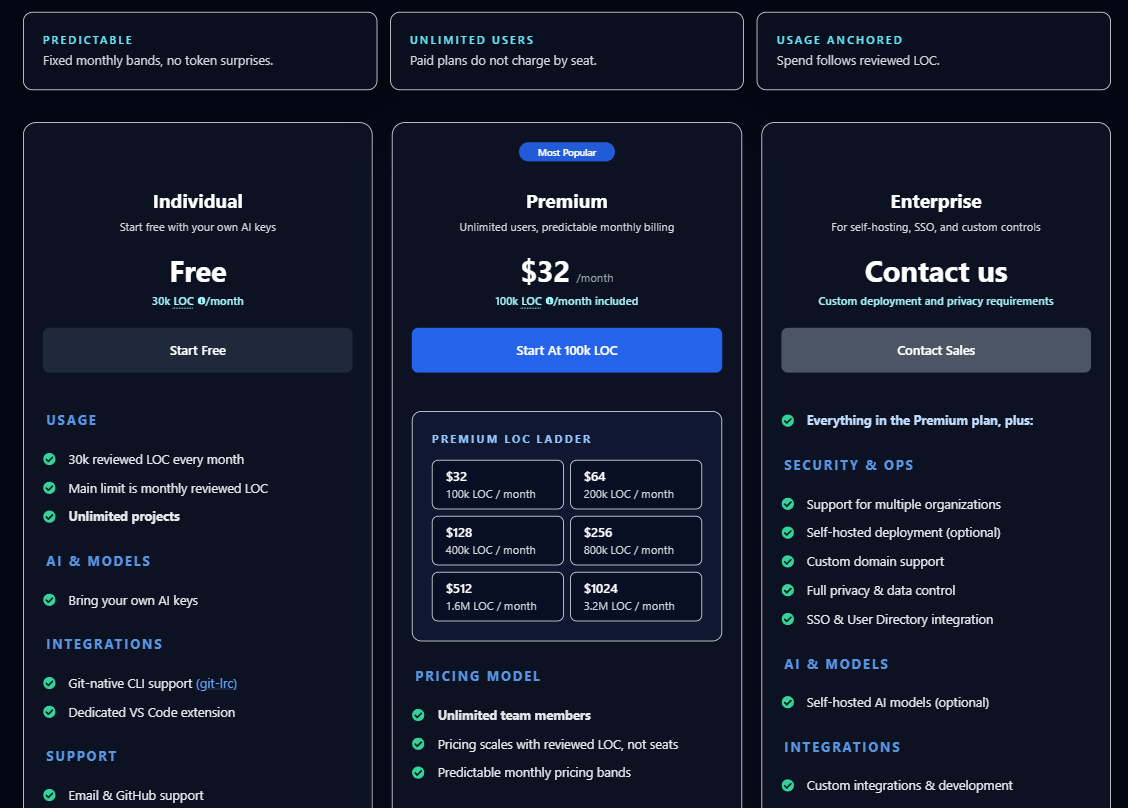

Why the Pricing Is Meant to Stay Predictable

Let me also briefly explain the pricing.

We charge based on lines of code scanned.

You can have unlimited users and unlimited team members, but usage is priced predictably based on scanning volume.

For example:

- $32 for 100,000 lines

- $64 for 200,000 lines

- $128 for 400,000 lines

- $256 for 800,000 lines

Price scales linearly with volume: every tier works out to $0.32 per 1,000 lines.
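The tiers quoted above share a single rate. The small sketch below checks this and picks the smallest listed tier for an estimated volume. It assumes you buy one of these four blocks outright. The text above does not say how billing actually works, so treat this as back-of-the-envelope arithmetic, not a quote.

```python
from typing import Optional, Tuple

# The four example tiers listed above: (lines of code scanned, price in US dollars).
TIERS = [(100_000, 32), (200_000, 64), (400_000, 128), (800_000, 256)]


def dollars_per_thousand_lines(lines: int, dollars: int) -> float:
    return dollars * 1000 / lines


def smallest_tier(lines_needed: int) -> Optional[Tuple[int, int]]:
    """Cheapest listed tier covering lines_needed (assumes tiers are bought as blocks)."""
    for lines, dollars in TIERS:
        if lines_needed <= lines:
            return lines, dollars
    return None  # beyond the largest example tier listed above
```

For instance, under this assumption a hypothetical volume of 250,000 lines lands in the 400,000-line, $128 example tier.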

The important point is that there are no surprise bills.

AI usage is included in the pricing, so you do not need to pay separately for models.

You can onboard your entire team — whether you are a startup, small business, or larger company.

We also offer enterprise plans for organizations that need custom deployments or integrations.

But generally, most teams can simply start with the standard paid plans.

There is also a free tier with around 30,000 lines of code included, so you can try it out before committing.

So give it a try, explore it, and see whether this style of lightweight review improves your workflow.