Responsible Design: Shaping Large-Scale Consequences by Organizing Agents

A reflection on AI accountability, individual responsibility, and why careful design is the only viable way to shape outcomes in complex agentic systems.

A video version of this post is also available if you prefer that.

Recently on LinkedIn, I saw a comment that stayed with me.

This comment was made by Mr. Meheryar Tata. He's a CTO and also has a financial background. I think he was a CA (chartered accountant) as well.

So, let's get into it.

What he said is that AI, by itself, cannot have accountability structurally because it has nothing to lose. Only humans and corporations can be accountable because a broken promise has economic consequences. In the long term, this means that the only remaining job will be risk underwriting. You will receive a premium to be held accountable when things go south. Basically, insurance.

This is an extremely thought-provoking comment, in my opinion, because what he's essentially saying is that semi-autonomous systems will do most of the jobs of the future.

Driving a car? AI will do it. Balancing the books? AI will do it. Teaching? AI will do it. Surgery? AI will do it. Arguing for justice? AI will do it. Everything — AI will do it.

So, what does the human do?

Well, a human or a corporation guarantees the outcome. If things go wrong, someone must be held accountable so that bad consequences meet a proportionate response.

As progress happens, I think we also increasingly wish for security guarantees across all kinds of human activity.

That was his view.

Now let's go a little further. I don't know whether all of you know what this is about, but this is a picture of Hammurabi.

You may know the Code of Hammurabi. Hammurabi was a Babylonian king, and his code is one of the earliest known written sets of laws.

Most of these were essentially "if this, then that" kinds of laws. Even in India, we had people like Chanakya. In China, people like Han Fei. Different civilizations were developing legal systems. The whole idea was that things go wrong in society all the time, and the question becomes: how do we deal with it?

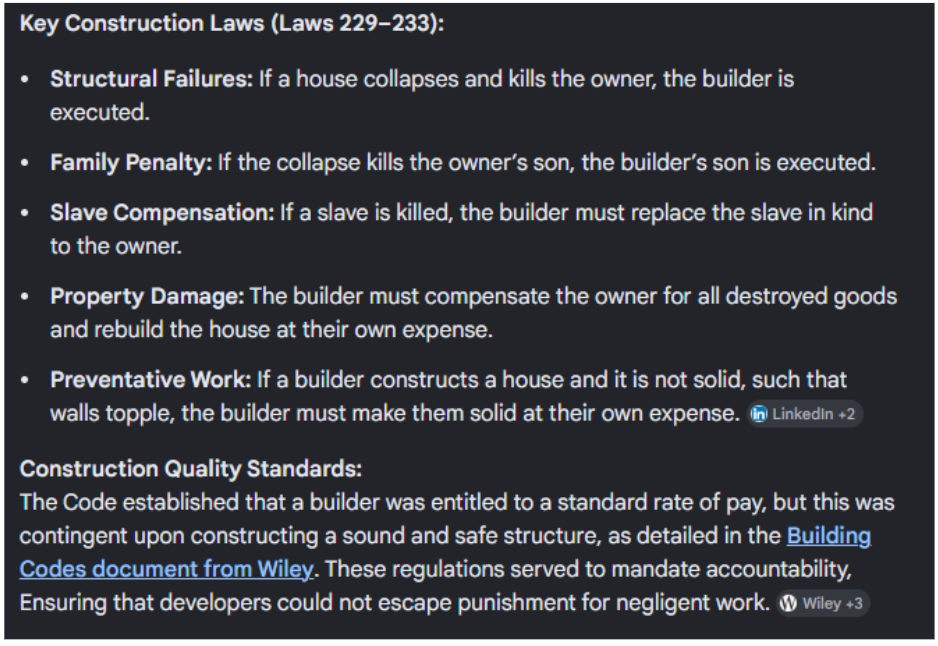

For example, he had laws dealing with structural failure. If a house collapses and kills the owner, then the builder must be executed. The builder therefore has a reason to be careful and design things properly.

Or if the collapse kills the owner's son, then the builder's son is executed.

These kinds of systems were about compensation, damage prevention, deterrence, and quality standards.

There are several components to this, and I think it's a very interesting perspective.

So this is where Mr. Meheryar is coming from. This is the underlying view: how do you deter dangerous activities in society?

Now we'll move forward in history and look at another way of thinking about this.

There was someone called Admiral Hyman Rickover. He was the person who led the development of nuclear-powered submarines and made them practical.

During his time, this was seen as almost impossible because the Manhattan Project was still recent, and atomic energy was associated almost entirely with bombs. So it was considered very tricky to even imagine converting atomic energy into productive use.

He was taking it into national defense through nuclear submarines, and he made it safe. He made an extremely dangerous and new technology safe.

How did he do that?

His primary concept was responsibility.

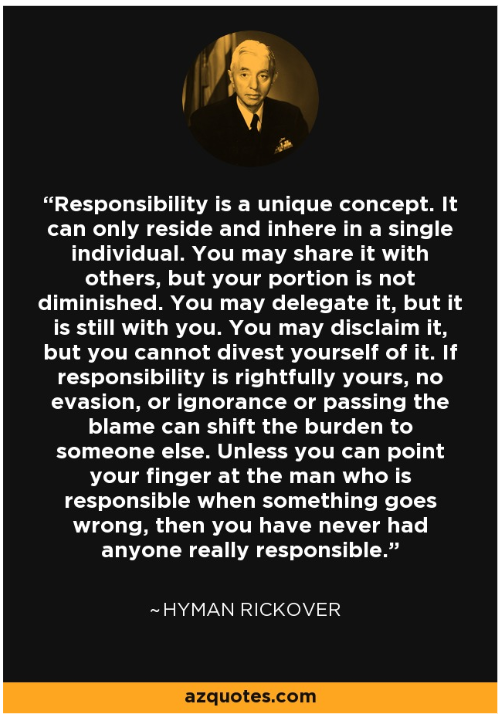

Here is his quote on responsibility.

What he said is:

"Responsibility is a unique concept. It can only reside and inhere in a single individual."

Look at the wording here.

Mr. Meheryar said that accountability can reside in a human or a corporation.

But Rickover is even harsher. He's saying only the individual can truly bear the consequence because, even inside an organization, someone must ultimately be held responsible.

People like Elon Musk insist that there must be an actual person's name behind every requirement.

You cannot hide behind an organization because, at the end of the day, one person is responsible for a particular thing.

You may share responsibility with others, but your own responsibility is not diminished.

It's a wonderful quote.

You can say ten people are on your team and that they contributed to a bad outcome, but the point is that all ten of you are still responsible.

That is the idea of responsibility he brings forward. Each person is responsible for the whole. You may delegate execution to someone else, but the responsibility remains with you, and you are still accountable.

You may disclaim responsibility, but you cannot divest yourself of it. You cannot escape it.

You cannot divide it, pass the buck, or say "I didn't know."

You are responsible.

So when something goes wrong, there has to be one person you can point to.

And as they say, if everyone is responsible, then probably no one is.

So this is another perspective on responsibility.

From the AI angle, this is something we have to think deeply about.

How do we make people responsible for systems they do not even understand?

We cannot fully predict what AI systems will do.

Even at the societal level, we cannot predict what every individual will do, and yet governments still say they will provide justice.

That is the whole idea of the state. Even in highly complex situations, where taking responsibility seems impossible, we still try to figure out a way to take responsibility.

The next person is Herbert Simon.

Herbert Simon wrote a very important book called The Sciences of the Artificial, and I think his perspective matters a great deal here.

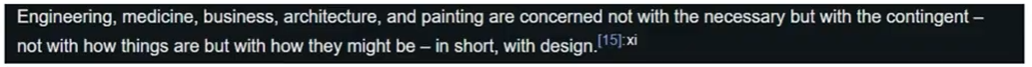

What he said is that engineering, medicine, business, architecture, painting, and many other fields are concerned not with the necessary, but with the contingent — not with how things are, but with how they might be. In short, they are concerned with design.

This whole book, The Sciences of the Artificial, is essentially another way of talking about the science of design.

Most modern disciplines are fundamentally about design. Engineering is about design. Medicine is about design. Business is about design. Architecture is about design.

It is all about shaping outcomes: using intelligence, resources, capabilities, creativity, focus, and scholarship, everything available to us, to make things happen the way we want them to happen.

That is what design is about.

Design is a first-class intellectual discipline. It is not decoration. It is about taking existing situations and transforming them into preferred outcomes.

This applies to architecture, policy, organizations, UI, economics — everything.

It is fundamentally about consequences.

And again, Simon brings in this perspective of complexity.

"The relation of program to environment opens up an exceedingly important role for computer simulation as a tool for achieving a deeper understanding of human behavior."

Back then, we could not model human behavior at a very fine-grained level. This was long before today's AI systems.

"For if it is the organization of components, and not their physical properties, that largely determines behavior, and if computers are organized somewhat in the image of man, then the computer becomes an obvious device for exploring the consequences of alternative organizational assumptions for human behavior."

Applied to our situation, what he is essentially saying is that each AI agent is a component, and now we are going to organize these agents into coherent systems.

We must organize them in such a way that harm does not befall humanity.

We already do this with potentially dangerous systems. For example, we have armies, but armies are placed under civilian control. Even if there is a coup attempt, there are mechanisms to restore order.

The idea is that we use intelligent organizational structures to keep dangerous power under control in the way we want.

The entire system is designed so that outcomes align with our intentions.

That is what Simon is saying.

So how does all this relate back to Mr. Meheryar?

Insurance is one thing. Deterrence is another.

But in the modern view, we must take responsibility for the outcomes of complex systems.

And how do we do that?

We do it through design.

We have to design systems carefully. We have to think about new organizational structures. We have to put things together in sensible ways so that harm is minimized and benefits are maximized.
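To make this a little more concrete, here is a minimal sketch of one such organizational structure, written in Python. It is only an illustration under my own assumptions, not an established framework: the names `Owner`, `Agent`, `Action`, and `Organization`, the 0-to-1 risk scale, and the 0.5 approval threshold are all hypothetical. The idea it shows is Rickover's: every agent has exactly one named person behind it, and a risky action runs only if that same person signs off.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class Owner:
    name: str  # one named individual, never a team or a department


@dataclass
class Agent:
    name: str
    owner: Owner  # exactly one accountable person per agent


@dataclass
class Action:
    agent: Agent
    description: str
    risk: float  # hypothetical scale: 0.0 (harmless) to 1.0 (severe)


@dataclass
class Organization:
    approval_threshold: float = 0.5  # assumed cutoff for human sign-off
    audit_log: list = field(default_factory=list)

    def execute(self, action: Action, approved_by: Optional[Owner] = None) -> str:
        owner = action.agent.owner
        needs_signoff = action.risk >= self.approval_threshold
        # A risky action runs only if the agent's own owner approved it.
        if needs_signoff and approved_by != owner:
            status = "blocked"
        else:
            status = "executed"
        # Every decision is recorded against a single person's name.
        self.audit_log.append((action.description, owner.name, status))
        return status


org = Organization()
alice = Owner("Alice")
bot = Agent("ledger-bot", alice)

print(org.execute(Action(bot, "reconcile accounts", 0.2)))            # executed
print(org.execute(Action(bot, "wire funds", 0.9)))                    # blocked
print(org.execute(Action(bot, "wire funds", 0.9), approved_by=alice)) # executed
```

The design choice worth noticing is that the log never records "the system" or "the team" as the responsible party. Whatever the agent does, there is one name next to it.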

That, I hope, gives you an idea of how I think about AI and how to shape the future with AI.